This post is a follow-up to Kaggle’s “How to get started with data science in containers” post.

I had some trouble setting up the environment on Windows, so I decided to document my steps and discoveries.

I also (I like to think) improved the provided script a little by preventing it from creating multiple containers.

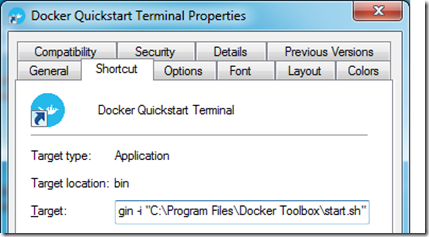

As the post above indicates, the first thing you have to do is to install Docker: https://docs.docker.com/windows/

Because the Docker Engine daemon uses Linux-specific kernel features, you can’t run Docker Engine natively in Windows. Instead, you must use the Docker Machine command, docker-machine, to create and attach to a small Linux VM on your machine.

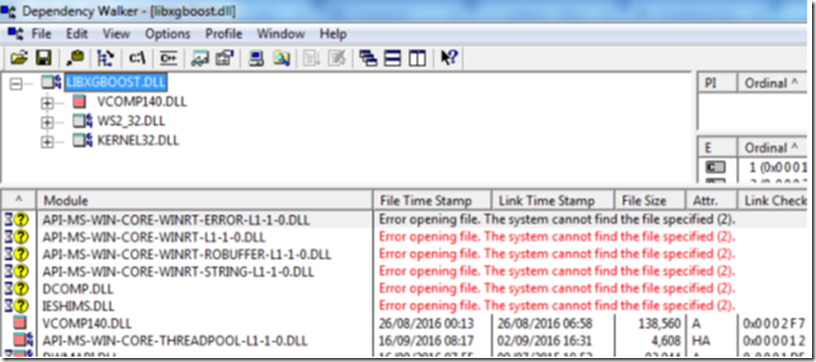

The installer is really straightforward, and once it completes it leaves a link to the Docker Quickstart Terminal on your desktop. When you run it, the “default” machine is started, but as Kaggle’s post mentions, “it’s quite small and struggles to handle a typical data science stack”, so the post walks you through the process of creating a new VM called “docker2”. If you followed the steps, you will be left with two VMs:

To avoid having to run

docker-machine start docker2

eval $(docker-machine env docker2)

to change the VM every time you launch your CLI, you can edit the start.sh script that the terminal shortcut calls and change the VM’s name there, so that it starts the docker2 VM for you.
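If you would rather not touch start.sh, the same two commands can be wrapped in a small shell function (for example in your ~/.bashrc). This is only a sketch: `use_vm` is a made-up name, and it assumes `docker-machine status` prints `Running` for a VM that is already up.

```shell
# Hypothetical helper: start the given VM only if needed,
# then point the docker CLI at it.
use_vm() {
    local vm="${1:-docker2}"   # default VM name taken from the Kaggle post
    if [ "$(docker-machine status "$vm" 2>/dev/null)" != "Running" ]; then
        docker-machine start "$vm" || return 1
    fi
    # Export DOCKER_HOST and friends into the current shell
    eval "$(docker-machine env "$vm")"
}
```

After that, `use_vm` (or `use_vm some_other_vm`) replaces the two commands above.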

When you log in, you should be in your user folder, “c:\users\<your_user_name>”.

You can see that I already have Kaggle’s python image ready to be used and that I have no containers running at the moment:
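Those two checks are normally done with `docker images` and `docker ps -a`. If you are not sure whether the image has been pulled yet, something like the following can help. It is a sketch, not part of the original tutorial: `ensure_image` is a hypothetical helper that relies on `docker images -q` printing only image IDs, so empty output means the image is not available locally.

```shell
# Hypothetical helper: pull the image only if it is not available locally.
ensure_image() {
    local image="${1:-kaggle/python}"
    if [ -z "$(docker images -q "$image")" ]; then
        echo "$image not found locally - pulling"
        docker pull "$image"
    fi
}
```

Calling `ensure_image` with no argument checks for `kaggle/python`.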

To make things simpler (and to avoid seeing all the files we normally have in our user folder), I created the _dockerjupyter folder and will be working inside it.

Inside that folder, I created a script called StartJupyter, which is based on the one found in the tutorial, but with a few modifications.

There is of course room for improvement in this script, like making the container name, the port and maybe the VM name (in case you chose anything other than “docker2”) parameters.

CONTAINER=KagglePythonContainer

RUNNING=$(docker inspect -f '{{.State.Running}}' "$CONTAINER" 2> /dev/null) # discard STDERR; RUNNING stays empty if the container doesn't exist yet (first run)

if [ "$RUNNING" == "" ]; then

echo "$CONTAINER does not exist - Creating"

(sleep 3 && start "http://$(docker-machine ip docker2):8888")&

docker run -d -v "$PWD":/tmp/dockerwd -w=/tmp/dockerwd -p 8888:8888 --name "$CONTAINER" -it kaggle/python jupyter notebook --no-browser --ip="0.0.0.0" --notebook-dir=/tmp/dockerwd

exit

fi

if [ "$RUNNING" == "true" ]; then

echo "Container already running"

else

echo "Starting Container"

docker start "$CONTAINER"

fi

#Launch URL

start "http://$(docker-machine ip docker2):8888"

The two main modifications are the “-d” flag, which runs the container in the background (detached mode), and the “--name” flag, which I use to check the container’s state and existence. This is important to avoid creating more than one container: a second one would conflict with the first (due to the port assignment) and be left stopped. This is what would happen:

The “-v $PWD:/tmp/dockerwd” option maps your current working directory to “/tmp/dockerwd” inside the container, so Jupyter’s initial page will show the contents of the folder you are in.
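As mentioned earlier, the container name, the port and the VM name could be turned into parameters. Here is a minimal sketch of how that might look. The variable names, their defaults and the two function names are my own assumptions; the docker commands themselves are the same ones the script already uses.

```shell
# Assumed names and defaults; override them in the environment before calling.
CONTAINER="${CONTAINER:-KagglePythonContainer}"
PORT="${PORT:-8888}"     # host port published for Jupyter
VM="${VM:-docker2}"      # docker-machine VM name

# Prints "missing", "running" or "stopped" for $CONTAINER.
container_state() {
    local running
    running=$(docker inspect -f '{{.State.Running}}' "$CONTAINER" 2>/dev/null)
    case "$running" in
        true) echo running ;;
        "")   echo missing ;;   # inspect failed: no such container
        *)    echo stopped ;;
    esac
}

# Creates or starts the container as needed, then prints the Jupyter URL.
start_jupyter() {
    case "$(container_state)" in
        missing)
            docker run -d -v "$PWD":/tmp/dockerwd -w=/tmp/dockerwd \
                -p "$PORT":8888 --name "$CONTAINER" -it kaggle/python \
                jupyter notebook --no-browser --ip="0.0.0.0" \
                --notebook-dir=/tmp/dockerwd ;;
        stopped) docker start "$CONTAINER" ;;
        running) echo "Container already running" ;;
    esac
    echo "http://$(docker-machine ip "$VM"):$PORT"
}
```

With this in place, something like `PORT=9999 CONTAINER=SecondNotebook start_jupyter` would give you a second, independent container without a port clash. Opening the browser is left out here; the printed URL can be passed to `start` as in the original script.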

Running the code will create and start the container in detached mode:

It will also open the browser on your current working directory where you’ll probably only see the StartJupyter script you just ran. I’ve also manually added a “test.csv” file to test the load:

By creating a new notebook, you can see the libraries’ location (inside the container), see that the notebook is indeed working from /tmp/dockerwd, and read files from your working directory (thanks to the mapping):

Now, since we started the container in detached mode (-d), we can connect to it using the exec command. By doing so, we can navigate to the /tmp/dockerwd folder, see that our files are there and even create a new file, which will of course show up in the Jupyter page:

docker exec -it KagglePythonContainer bash

We can also see that we have 2 different processes running (ps -ef):

FYI: you will need to run

apt-get update && apt-get install procps

to be able to run the ps command

Finally, as mentioned before, the script will never create more than one container. So, if for some reason your container is stopped (if your VM is restarted, for example), the script will just fire up the existing container named “KagglePythonContainer”, and it won’t do anything if you call it with the container already running. In both cases, Jupyter’s URL will always be opened:

To understand Docker better, I highly recommend these tutorials:

https://training.docker.com/self-paced-training